I got in a few rides these past few weeks, and some good cello time too, but my major focus has been on “infrastructure” projects:

Bike

The Santa Cruz, after four years of that “new bike feeling,” is starting to show some signs of age. Nothing bad, just things like shifting problems in the highest gears, so I might need new cables and maybe housing, and some trouble with the tire valves: I’ve got a slow leak in the rear tire caused by a torn o-ring, and a gummed-up valve up front.

For the tires I got a “valve repair kit” from Saucon Valley Bikes. The tubeless tire valves are pretty easy to take apart and work with, so I was able to replace the rear o-ring — I can’t be sure if it worked perfectly, but it’s working enough for now — and have new valve innards on deck if the front tire becomes too annoying. The shifting seems sort of OK for the moment after I did some serious derailleur cleaning, but I can tell I’ll have to deal with those cables sooner rather than later.

Meantime, I noticed a slight creak coming from the bottom bracket…

SSL

For my website I’ve been using an SSL/TLS certificate from Let’s Encrypt, which I obtained using SSLForFree, since Let’s Encrypt is pretty difficult on its own. These certificates need to be renewed every 90 days, but when I went to do it the next-to-last time, I found that SSLForFree had been bought out by ZeroSSL, who use their own certificates and who intend to charge for anything beyond a limited number of free ones. I used them that time, but spent the next three months looking into a better option.

The ZeroSSL certificate expired a few days ago, but I had already replaced it with one from Let’s Encrypt, using a rather laborious process on yet another website. It’s very doable, but I think I’ll continue looking for a better method.

Towpath Amenities

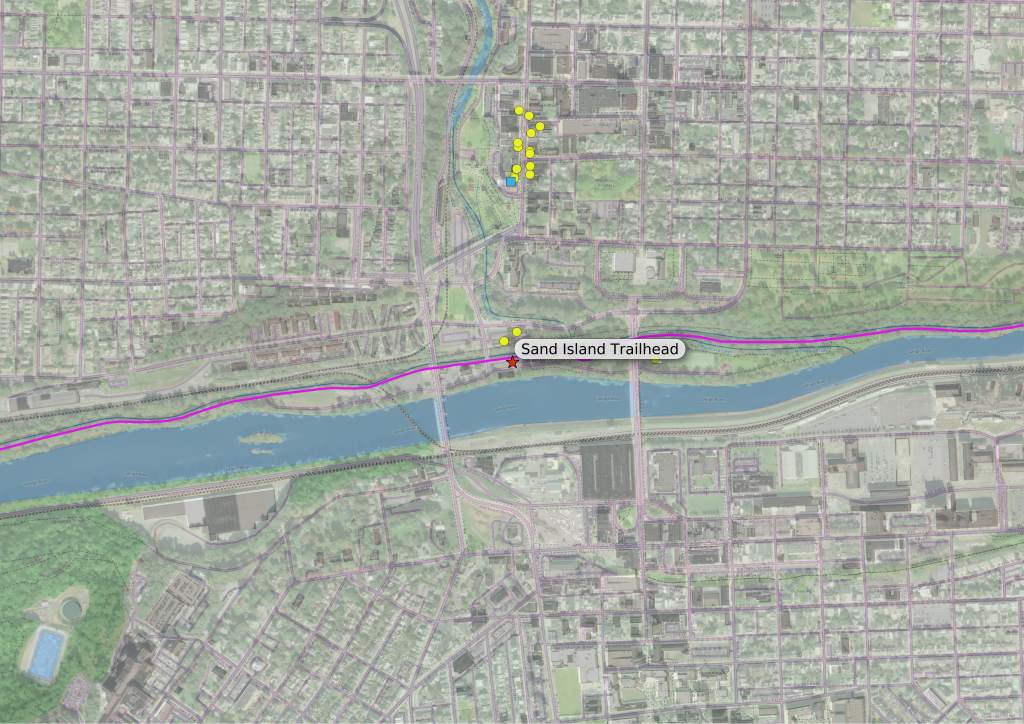

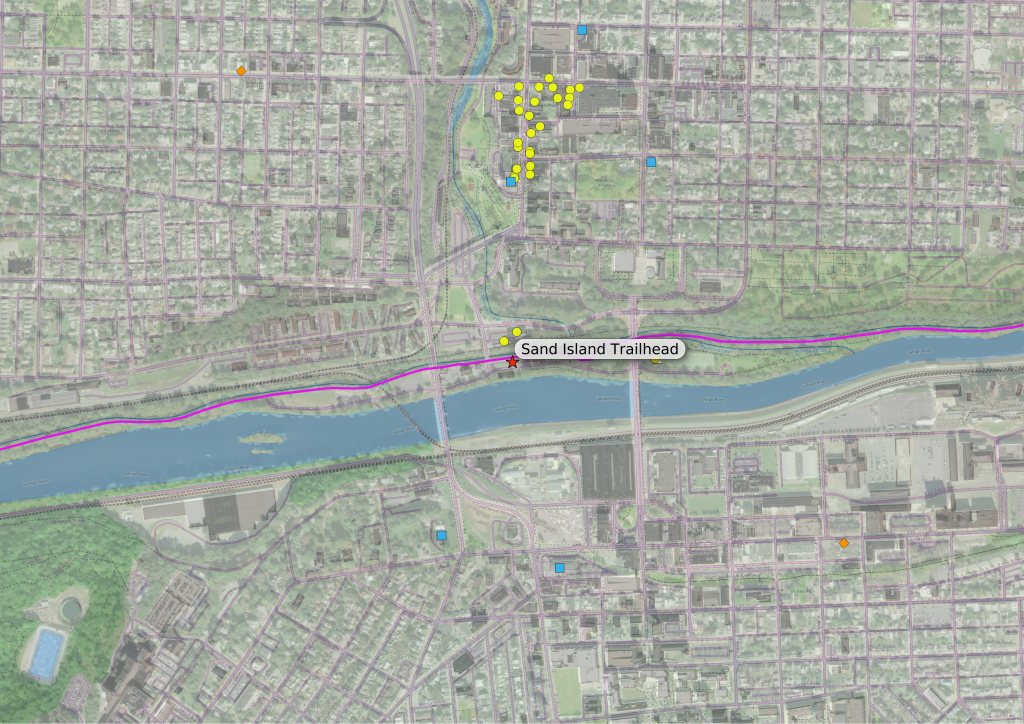

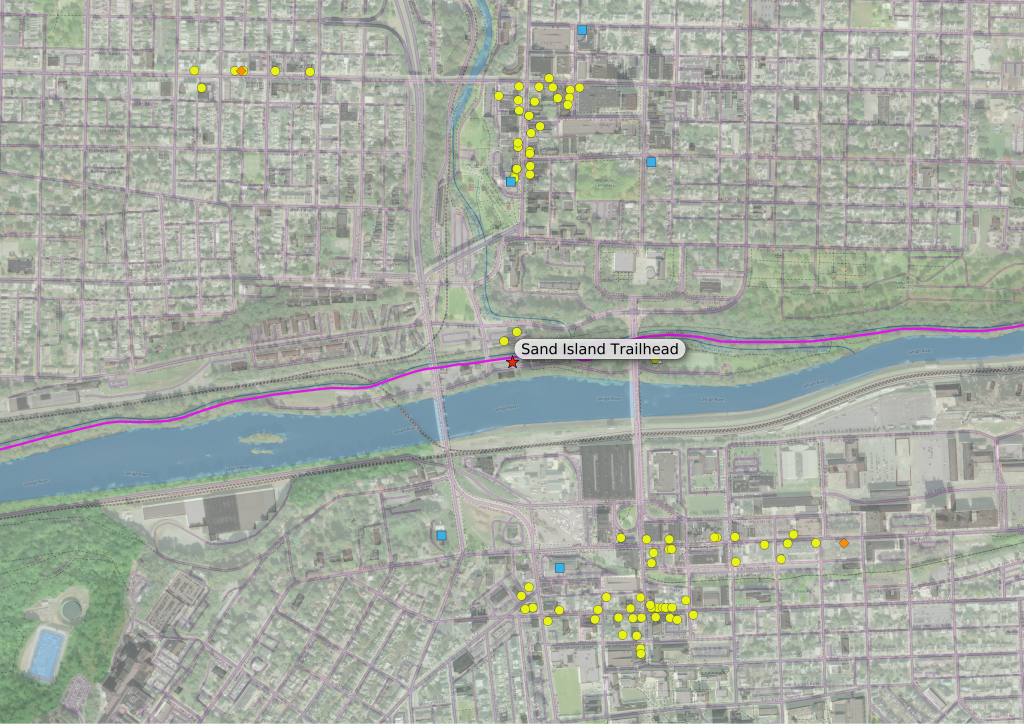

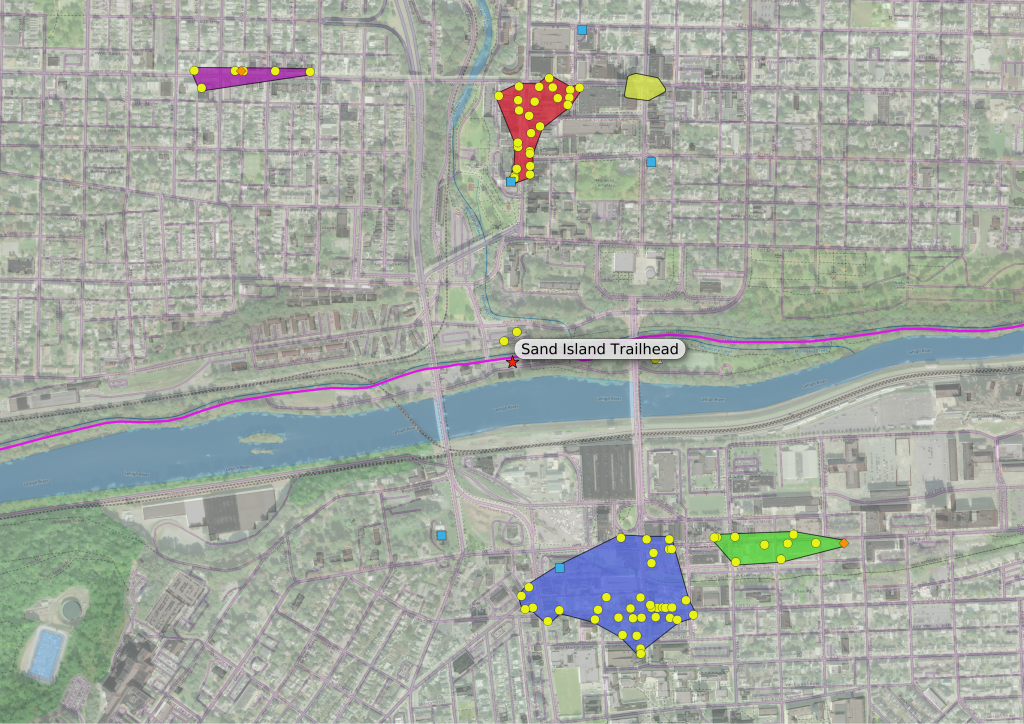

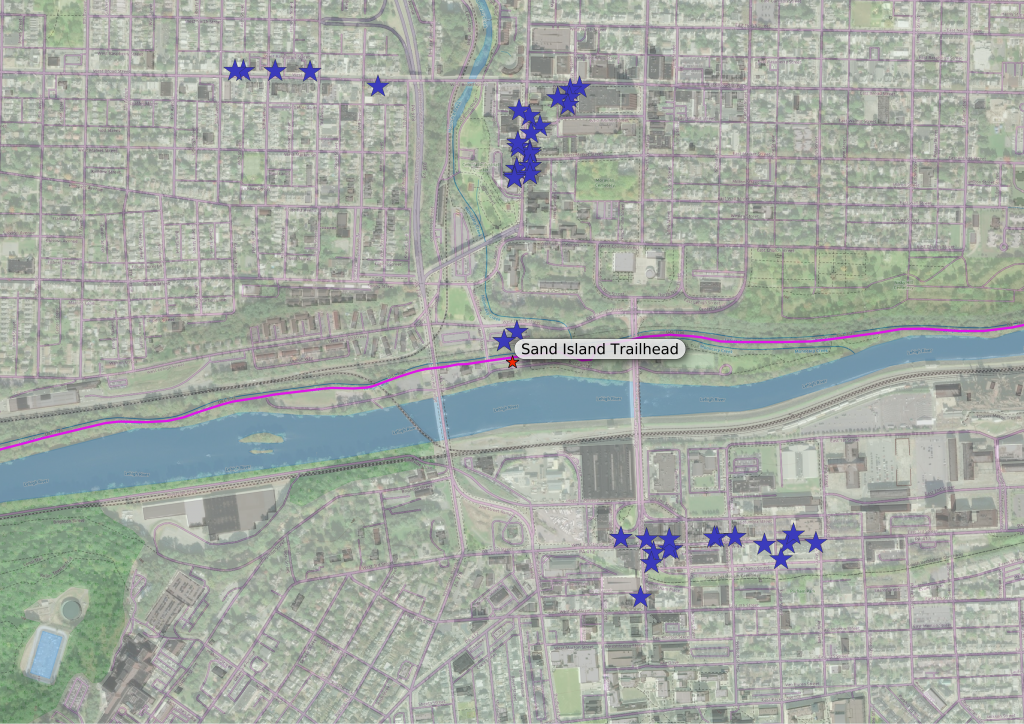

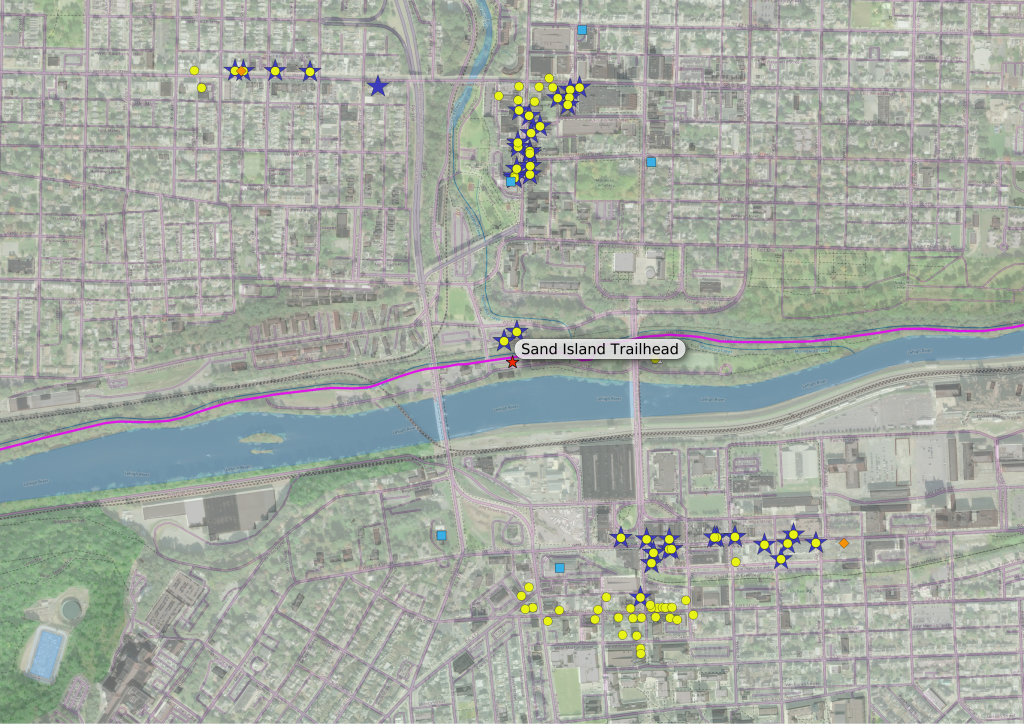

This is a bit of old news, but I’ve added the amenities and access points along the towpath between New Hope and Morrisville. That leaves about 10 miles to add, the section from Morrisville to Bristol, and every access point and amenity I could find there is already in my database. All that’s left is to ground-truth some of the info, then I can update the map. This last addition will make the map complete, but that won’t make the job done: this job will never be done…

I started thinking about my method of routing the other day: the routine finds the point on the road network closest to my access point (the start) and the point on the network closest to my amenity (the endpoint), then finds the shortest path through the network between start and end points. But what if the start and end points on the network are not particularly close to their respective access or amenity points?
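As a rough sketch of that snap-then-route idea (not the actual routine, which isn't shown here), the Python below uses a made-up four-node network with coordinates in yards. The names `NODES`, `EDGES`, `nearest_node`, `shortest_path` and `route` are all hypothetical, and it snaps to the nearest network node rather than the nearest point along an edge, which is a simplification. It also returns the leftover straight-line gap between the amenity and the end of the route, which is the quantity the question above is about.

```python
import heapq
import math

# Hypothetical toy network: node -> (x, y) in yards, plus edge lengths in yards.
NODES = {"A": (0, 0), "B": (100, 0), "C": (100, 100), "D": (0, 100)}
EDGES = {
    "A": {"B": 100, "D": 100},
    "B": {"A": 100, "C": 100},
    "C": {"B": 100, "D": 100},
    "D": {"A": 100, "C": 100},
}

def nearest_node(point, nodes):
    """Return the network node closest to an arbitrary (x, y) point."""
    return min(nodes, key=lambda n: math.dist(point, nodes[n]))

def shortest_path(edges, start, end):
    """Plain Dijkstra search; returns (length, [nodes]) or (inf, []) if unreachable."""
    queue = [(0.0, start, [start])]
    seen = set()
    while queue:
        dist, node, path = heapq.heappop(queue)
        if node == end:
            return dist, path
        if node in seen:
            continue
        seen.add(node)
        for nxt, length in edges[node].items():
            if nxt not in seen:
                heapq.heappush(queue, (dist + length, nxt, path + [nxt]))
    return math.inf, []

def route(access_point, amenity, nodes=NODES, edges=EDGES):
    """Snap both points to the network, route between them, and report
    how far the amenity sits from the end of the route."""
    start = nearest_node(access_point, nodes)
    end = nearest_node(amenity, nodes)
    length, path = shortest_path(edges, start, end)
    gap = math.dist(amenity, nodes[end])  # straight-line leftover, in yards
    return length, path, gap
```

Calling `route((5, -3), (110, 95))` snaps the access point to node A and the amenity to node C, and the returned gap shows how much of the trip the network route does not cover.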

I originally assumed that this would not be an issue: access points were basically intersections of the D&L with the road network, and almost all amenities should be very near some road or path that customers use to get there. Then I figured out a way to check…

Most amenities were within about 25 yards of their route’s endpoint, the distance being mostly open space like a parking lot or driveway. I figured that this was acceptable, but I also found a few amenities that were farther away, say between 25 and 50 yards from their endpoints. Again they were on the far sides of parking lots and such from the ends of their routes, but these distances seemed a bit too large to leave be, so I added service lanes and driveways as necessary. I’m not sure why these weren’t already a part of the network, but they are there now; I updated the routes to the offending amenities and all was well.

There was a third group of amenities that I found, and these were the ones I had been worrying about: the ones where the database has a route, but in real life the route’s endpoint is nowhere near the amenity, and maybe the amenity isn’t even accessible from the endpoint. (One example could be a store along a roadway I’d deliberately excluded from the route network, such as a fast food place along a highway. The routing program would find a path to the closest point still on the allowed roads, and leave the cyclist to connect the endpoint and the amenity “as the crow flies,” crossing freeways or God-knows-what, and I’m back to square one.)

Luckily, I only found a few of these, and they were all total outliers: places that were in the database, but were too distant and isolated to be considered “accessible.” For now I’m leaving them in the database, but I guess I’ll eventually have to remove them. I’ll have to look more carefully at the relationship between new amenities and the road network in the future if I add any more, to make sure they actually connect.
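The three-way sorting described above could be sketched roughly like this in Python. It assumes, hypothetically, that each amenity and its route endpoint are available as latitude/longitude pairs in degrees. The 25 and 50 yard cutoffs come from the text, while the function names and the labels ("ok", "extend network", "outlier") are made up for illustration, and the actual check may well have been done some other way.

```python
import math

# Mean Earth radius in metres, converted to yards (1 yard = 0.9144 m).
EARTH_RADIUS_YARDS = 6371008.8 / 0.9144

def haversine_yards(a, b):
    """Great-circle distance in yards between two (lat, lon) points in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_YARDS * math.asin(math.sqrt(h))

def classify_gap(amenity, endpoint):
    """Bucket an amenity by how far it sits from the end of its route."""
    gap = haversine_yards(amenity, endpoint)
    if gap <= 25:
        return "ok"              # across a parking lot or driveway
    if gap <= 50:
        return "extend network"  # add a service lane or driveway, then re-route
    return "outlier"             # probably not really accessible
```

Running `classify_gap` over every amenity and its route endpoint would give a short list of places to fix or reconsider, without having to eyeball the whole map.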

Network (the other kind)

One last piece of infrastructure activity: we are switching our internet provider, from DSL on Verizon to RCN cable. I bought a cable modem and a wifi router, and called RCN the other day; the cable installers should be here this afternoon.

I got us the slowest package, 10 Mbps, which is about four times faster than what we have now and costs about $20/mo less, before even considering the cost of the landline we’ll be abandoning when we get rid of Verizon. (If we need it we can upgrade our package, but we’ve been making do with DSL for so long that 10 Mbps will probably seem blazing fast.)