I use a script to help me with Wordle — creating the script, and watching it work (rather than the actual play itself), are what I like most about playing Wordle.

My script works in conjunction with a text file of the most common five-letter words. Part of the script runs that file through a regular expression filter to get the words that are still valid at any point in the game. It then counts how often each letter appears in that subset and rates each word by how many high-count letters it contains. This is an especially good tool early in the game, since it helps to either confirm or eliminate the most obvious letter choices right off the bat, but it has diminishing returns with later choices.
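Here is a minimal Python sketch of that letter-count idea, not the actual script. The function names, the choice to count each letter once per word, and the example pattern are all assumptions made for illustration.

```python
import re
from collections import Counter


def filter_words(words, pattern):
    """Keep only the words that fully match a regular expression.

    `pattern` is a hypothetical stand-in for whatever filter the
    current game state calls for.
    """
    rx = re.compile(pattern)
    return [w for w in words if rx.fullmatch(w)]


def rank_by_letter_frequency(candidates):
    """Rank words by the summed frequency of their distinct letters.

    Assumption: each letter is counted once per word, so repeated
    letters do not inflate a word's score.
    """
    counts = Counter()
    for word in candidates:
        counts.update(set(word))
    scores = {w: sum(counts[c] for c in set(w)) for w in candidates}
    # Highest score first; ties are broken alphabetically.
    ranked = sorted(candidates, key=lambda w: (-scores[w], w))
    return ranked, scores


words = ["slate", "crane", "plate", "stale", "mummy"]
valid = filter_words(words, r"[^m]{5}")  # example rule: no letter "m"
ranked, scores = rank_by_letter_frequency(valid)
print(ranked)
```

Because the counts are rebuilt from whatever words are still valid, the ranking shifts as the game narrows the list.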

I modified the script to similarly score multiple-letter combinations, but the results were mixed. Past a certain point, this approach really doesn’t do much, and adding letters didn’t change that. My average score hovered just below four guesses per game…

The “wordle bot” assistant has its own way to assess words, based on a “divide and conquer” approach: the results of each word selection basically split the potential words into several groups, where all the words in each group would have produced the same result if they were the answer. (I call the groups “buckets,” and the approach the “bucket method.”) The more buckets a word choice can split the potential words into, and the fewer words are in each bucket, the better that word is for zeroing in on the correct answer.
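The bucket idea can be sketched in Python as below. This is an illustration under stated assumptions, not the bot's code or the script described here: the `feedback` encoding ("g", "y", "b" for green, yellow, gray), the function names, and the ranking rule (most buckets first, then smallest largest bucket) are all hypothetical choices.

```python
from collections import Counter, defaultdict


def feedback(guess, answer):
    """Return the result pattern for a guess: g=green, y=yellow, b=gray."""
    result = ["b"] * len(guess)
    remaining = Counter()
    # First pass: mark greens and tally the answer letters left over.
    for i, (g, a) in enumerate(zip(guess, answer)):
        if g == a:
            result[i] = "g"
        else:
            remaining[a] += 1
    # Second pass: mark yellows, using up leftover letters one at a time.
    for i, g in enumerate(guess):
        if result[i] != "g" and remaining[g] > 0:
            result[i] = "y"
            remaining[g] -= 1
    return "".join(result)


def buckets(guess, candidates):
    """Group candidate answers by the result they would give for `guess`."""
    groups = defaultdict(list)
    for answer in candidates:
        groups[feedback(guess, answer)].append(answer)
    return dict(groups)


def rank_guesses(guesses, candidates):
    """Sort guesses: more buckets is better, then a smaller largest bucket."""
    def key(guess):
        groups = buckets(guess, candidates)
        largest = max(len(g) for g in groups.values())
        return (-len(groups), largest, guess)
    return sorted(guesses, key=key)


candidates = ["batch", "catch", "hatch", "latch", "match"]
print(buckets("batch", candidates))
print(rank_guesses(["batch", "climb"], candidates))
```

In this example "batch" splits the five candidates into only two buckets, while "climb" gives each candidate its own bucket, so "climb" ranks first even though it cannot be the answer.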

I decided to try this approach, writing a Python script to do the work. It was surprisingly easy, and it worked really well, so I added some sorting and incorporated it as an option in my original helper script.

The results, again, have been mixed. I think my average score is now closer to 3 than 4, but I’ve also had a whole lot more fives (and some more twos) so the variance seems to have grown. My “skill” score went up, but my “luck” score dropped… Part of this (especially the “luck” part) is because I’m not selecting words as randomly, or weighting the odds, like my old method did. So I now start with my go-to choice of “SLATE” (always, for luck), then use my original wordle-helper method to find the next word, and from there I use the new bucket method. Better, but still not great.

It turns out the new method is a bit more sensitive than the old one to unusable words in the word list. Wordle has a fairly large set of acceptable words (I think I saw somewhere that it was about 3000), but only a subset (say 2000?) can be winners, and none of them are plurals (no ending in “S”) or past tense verbs (mostly no ending in “ED”), and definitely no inappropriate words. There may be other deal-breakers too, but that’s a pretty good set of exceptions. Meanwhile my potential word list, which comes in at over 5000 words, contains all of those and more.

So I’ve been curating my list. I added some regular expression filters to avoid words ending in “S” or “ED,” and marked as many inappropriate words as I could find so they would not get considered. My results are now much better, though there are still a few words that my list considers common but the NY Times does not.
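A rough Python sketch of that kind of curation follows. The `curate` name, the lowercase word list, and the `blocked` set are assumptions for illustration. The real filters may be more selective than this blunt suffix rule.

```python
import re

# Matches any word ending in "s" or "ed" (assumes lowercase words).
EXCLUDED_ENDINGS = re.compile(r"(s|ed)$")


def curate(words, blocked=()):
    """Drop words with excluded endings or listed in `blocked`.

    `blocked` is a hypothetical hand-maintained set of words that
    should never be considered.
    """
    blocked = set(blocked)
    return [
        w for w in words
        if not EXCLUDED_ENDINGS.search(w) and w not in blocked
    ]


words = ["slate", "cakes", "baked", "glass", "crane", "plumb"]
print(curate(words, blocked={"crane"}))
```

Note that a rule this simple also throws out singular words that happen to end in "s", such as "glass", so it trades a few good words for a much cleaner list.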

Today’s score was a five, but I just had a long run of threes.

About my choice of “SLATE”

Even before I first wrote my wordle helper, I decided that “SLATE” was the best opening word, since it had what I thought were the most common letters, and the best combinations, to start the game. I’ve been using it ever since, and at some point I felt vindicated when the wordle-bot started using it as its own best starting word. Everyone else started using it too, but then the bot began to start with “PLATE” and my word became far less popular…

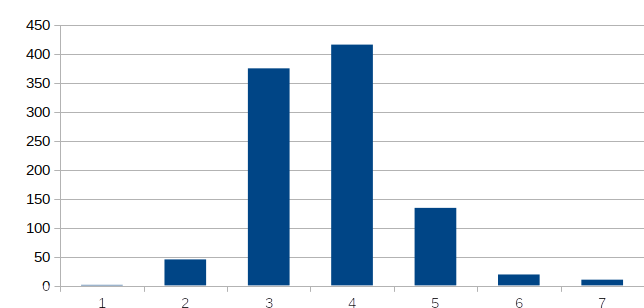

Well, I was playing with my new script, running it for a starting word (that is, with no restrictions except plurals, past tense and cusses), and the best choice by far was “SLATE.” I feel vindicated again, though I don’t yet know why “PLATE” is the current opener of choice for the bot.